Why AI SaaS products have a price floor

People like to compare AI SaaS pricing to ChatGPT's. That comparison stops being useful as soon as the product is expected to do real work inside a business.

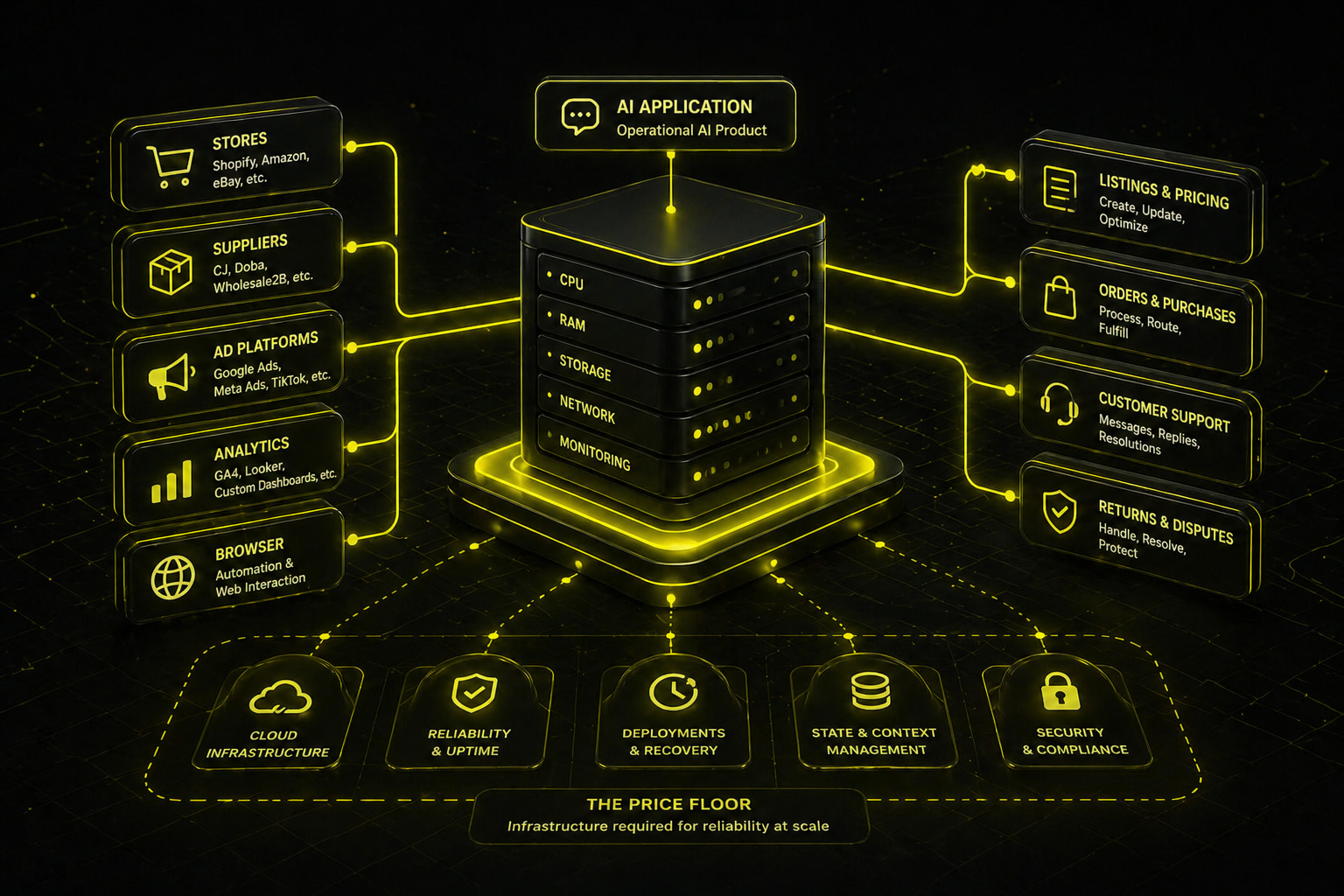

There is a practical price floor under that kind of software. It appears before model usage becomes the main question. Once an AI product has to connect to stores, supplier systems, ad platforms, analytics tools, and sometimes a browser, the cheapest setup is usually no longer the usable one.

That is the shift that makes pricing look strange from the outside.

A chat product can get away with being lightweight. An operational AI product cannot. It has to start cleanly, keep running, hold context, maintain connections, and return something useful without constant supervision. If it touches pricing, listings, supplier workflows, customer flows, or other parts of a business where mistakes cost money, reliability stops being a nice extra. It becomes part of the product itself.

This is where the lower end of AI SaaS pricing starts to disappear.

In practice, the first problem is often not inference cost. It is infrastructure reliability.

A small machine may be enough to prove that the system can run. That does not mean it is enough to run it well. The gap usually shows up during startup and deployment. The app launches too slowly, fails mid-deploy, times out while services initialize, or comes up in a half-working state. More RAM can help, but RAM is often only part of the story. CPU turns out to matter more than people expect, especially when the system has to boot multiple services, load dependencies, initialize browser tooling, restore state, and establish external connections.
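One way to make the half-working state visible is to gate each launch on explicit readiness checks with a deadline. The sketch below is a minimal illustration, not our actual startup code: `wait_until_ready` and the check names are hypothetical, and each check is assumed to be a cheap function that returns `True` once its component is usable.

```python
import time
from typing import Callable, Dict, List


def wait_until_ready(
    checks: Dict[str, Callable[[], bool]],
    timeout_s: float,
    poll_interval_s: float = 1.0,
    clock: Callable[[], float] = time.monotonic,
    sleep: Callable[[float], None] = time.sleep,
) -> List[str]:
    """Poll every check until all pass or the deadline expires.

    Returns the names of the checks that never passed. An empty list
    means a clean launch; a non-empty list means a half-working start.
    """
    deadline = clock() + timeout_s
    pending = dict(checks)
    while pending:
        # Drop every component that now reports ready.
        for name in [n for n, check in pending.items() if check()]:
            del pending[name]
        if not pending or clock() >= deadline:
            break
        sleep(poll_interval_s)
    return sorted(pending)
```

A deploy script could call this with one check per service, browser session, and external connection, and treat any returned name as a failed launch instead of letting traffic reach a partly initialized system.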

We saw that in our own tests of an OpenClaw-based commerce agent on Fly.io. The smallest machine that could technically run the system was not the smallest machine that could launch it reliably. A shared-cpu-2x machine with 2 GB of RAM could run the system, but deployments were unstable. Moving to 4 GB of RAM improved memory headroom, but startup was still inconsistent. Stable launches appeared only on shared-cpu-4x with 4 GB of RAM, where startup settled at about three minutes in our tests.

That changed the economics immediately.

At that point, the baseline server cost was already around $24 per month without discounts, or about $16 per month with an annual reservation. That is before model usage, storage, monitoring, support, logs, and the rest of the product are counted. Faster setups pushed the figure higher.
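The arithmetic behind that floor can be sketched in a few lines. Only the two server figures come from our tests. Everything else is an assumption made for illustration: the `price_floor` helper is hypothetical, it assumes one dedicated machine per customer, and the $10 of other costs and 50% target margin are placeholders rather than measured values.

```python
# Server figures from our tests (USD per month).
SERVER_MONTHLY_USD = 24.0            # without discounts
SERVER_MONTHLY_RESERVED_USD = 16.0   # with annual reservation


def price_floor(server_usd: float, other_costs_usd: float, target_margin: float) -> float:
    """Lowest monthly price that covers costs at the given gross margin.

    Assumes one dedicated machine per customer (an illustrative assumption).
    """
    if not 0 <= target_margin < 1:
        raise ValueError("target_margin must be in [0, 1)")
    return (server_usd + other_costs_usd) / (1 - target_margin)


# The annual reservation cuts the server bill by about one third.
reservation_saving = 1 - SERVER_MONTHLY_RESERVED_USD / SERVER_MONTHLY_USD

# Hypothetical inputs: $10 of other per-customer costs, 50% target margin.
floor_on_demand = price_floor(SERVER_MONTHLY_USD, 10.0, 0.5)          # 68.0
floor_reserved = price_floor(SERVER_MONTHLY_RESERVED_USD, 10.0, 0.5)  # 52.0
```

Under these placeholder inputs, the server line alone puts the floor well above a $20 subscription, even with the reservation discount applied.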

This is why the usual “but ChatGPT costs $20” benchmark creates more confusion than clarity.

ChatGPT is mostly sold as access to a model. A reliable AI SaaS product is sold as a working system. The customer is not paying only for tokens. They are paying for uptime, stable startup, integrations, state, error recovery, and the ability to trust the result enough to use it inside an actual workflow.

That difference matters even more when the product operates in a live business environment. If the system fails in a chat window, the user gets annoyed. If it fails while handling listings, pricing, ads, suppliers, or post-purchase operations, the business absorbs the damage. That is a different category of risk, and it forces a different category of infrastructure.

A simple rule helps here: if your AI product needs to do any of the following, it probably already has a price floor.

- Keep long-lived sessions or connections open

- Work across multiple external systems

- Use browser automation because direct APIs are missing or limited

- Handle sensitive operational data

- Recover cleanly after deploys or restarts

- Produce usable output without a human babysitting each run

Once those requirements appear, the minimum viable setup is no longer the cheapest one on the pricing page. It is the cheapest one that stays stable enough to trust.
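That selection rule is simple enough to write down. The sketch below is a hypothetical helper, not a tool we ship: `Candidate`, `cheapest_stable`, and the `min_success_rate` threshold are illustrative names, and the launch results are assumed to come from repeated test deploys of each machine size.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass(frozen=True)
class Candidate:
    name: str
    monthly_usd: float
    launches: Sequence[bool]  # one entry per test deploy: True if it came up cleanly

    @property
    def success_rate(self) -> float:
        # A size with no recorded launches is treated as unproven, not stable.
        return sum(self.launches) / len(self.launches) if self.launches else 0.0


def cheapest_stable(
    candidates: List[Candidate], min_success_rate: float = 1.0
) -> Optional[Candidate]:
    """Return the lowest-cost candidate that meets the stability bar, if any."""
    stable = [c for c in candidates if c.success_rate >= min_success_rate]
    return min(stable, key=lambda c: c.monthly_usd, default=None)


# Placeholder sizes, prices, and results, for illustration only.
options = [
    Candidate("small", 10.0, [True, False, True]),
    Candidate("medium", 15.0, [True, True, False]),
    Candidate("large", 24.0, [True, True, True]),
]
choice = cheapest_stable(options)  # picks "large", the first size with no failed launch
```

Filtering on stability first and cost second is the point of the sketch: the cheapest entry in the list never wins unless it also clears the reliability bar.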

That is what people often miss when they judge AI SaaS by how simple the interface looks. The interface may look like a chat box. The system behind it may look nothing like a chat subscription business.

The price floor is not set by design simplicity. It is set by the minimum infrastructure required to make the product dependable.

That is the real reason serious AI SaaS products often cost more than people expect. If you judge them as lightweight chat tools, they look expensive. If you judge them by what they are expected to do reliably, the pricing starts to make sense.